Scour - February Update

Hi friends,

In February, Scour scoured 647,139 posts from 17,766 feeds (1,211 were newly added). Also, 917 new users signed up, so welcome everyone who just joined!

Here's what's new in the product:

🔮 Inferring Interests from RSS Feeds

If you subscribe to specific feeds (as opposed to scouring all of them), Scour can now infer topics you might be interested in from them. You can click the link that says "Suggest from my feeds" on the Interests page. Thank you to the anonymous user who requested this!

👤 Improved Onboarding

The onboarding experience is simpler. Instead of typing out three interests, you can now describe yourself and your interests in free-form text. Scour extracts a set of interests from what you write. Thank you to everyone who let me know that they were a little confused by the onboarding process.

🔃 Ranking Improvements

I made two subtle changes to the ranking algorithm. First, the scoring algorithm now ranks posts by how well they match your closest interest and gives a slight boost if a post matches multiple interests. That was always the intended design, but I realized that multiple weaker matches were pulling scores down rather than boosting them.
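To illustrate the intended behavior, here is a minimal Python sketch. It is not Scour's actual code: the function name `score_post`, the similarity scale of 0 to 1, and the `boost_threshold` and `boost_weight` values are all assumptions made up for this example.

```python
def score_post(similarities, boost_threshold=0.5, boost_weight=0.05):
    """Score a post from its similarity to each of a user's interests.

    similarities: one float per interest, higher means a closer match.
    boost_threshold and boost_weight are hypothetical illustration values.
    """
    if not similarities:
        return 0.0

    ranked = sorted(similarities, reverse=True)
    best, rest = ranked[0], ranked[1:]

    # The closest interest sets the base score.
    # Other interests can only add a small boost, never subtract.
    extra_matches = sum(1 for s in rest if s >= boost_threshold)
    return best + boost_weight * extra_matches
```

With this shape, a post that matches one interest strongly and several others weakly scores at least as high as a post that matches only the strong interest, which is the property the fix restores.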

The second change was that I finally retired the machine-learning text quality classifier that Scour had been using. The final straw was when a blog post I had written (and worked hard on!) wasn't showing up on Scour. The model had classified it as low quality 😤. I had known for a while that what the model was optimizing for was somewhat orthogonal to my idea of text quality, but this is what finally pushed me to remove it. For the moment, Scour relies on a large domain blocklist (of just under 1 million domains) to keep low-quality content and spam out of your feed. I'm also investigating other ways of assessing quality without relying on social signals, but more on that to come in the future.

⚡ Massive Performance Improvements

I've always strived to make Scour fast, and it got much faster this past month. My feed, which compares about 35,000 posts against 575 interests, now loads in around 50 milliseconds. Even comparing all 600,000+ posts from the last month across all feeds takes only 180 milliseconds.

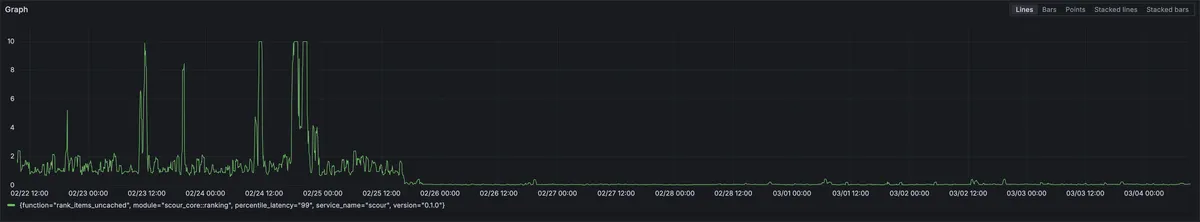

This graph shows the 99th percentile latency (the slowest requests) dropping from the occasional 10 seconds down to under 400 milliseconds (lower is better):

For those interested in the technical details, this speedup came from two changes:

First, I switched from scanning through post embeddings streamed from SQLite, which was already quite fast because the data is local, to keeping all the relevant details in memory. The in-memory snapshot is rebuilt every 15 minutes when the scraper finishes polling all of the feeds for new content. This change brought the very nice combination of much higher performance and lower memory usage. The memory savings come from the fact that SQLite connections have independent caches, so the same data could end up cached more than once, whereas the snapshot is shared.
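The snapshot idea can be sketched roughly like this in Python. This is only an illustration under assumptions, not how Scour is implemented: `SnapshotStore`, `load_posts`, `rebuild`, and `current` are hypothetical names, and the real data and scheduling will differ.

```python
import threading


class SnapshotStore:
    """Holds one shared, read-only snapshot that is swapped on rebuild."""

    def __init__(self, load_posts):
        # load_posts is a hypothetical callable that returns the posts
        # (for example, by reading them from the database).
        self._load_posts = load_posts
        self._lock = threading.Lock()
        self._snapshot = tuple(load_posts())

    def rebuild(self):
        # Build the new snapshot outside the lock so readers are not blocked
        # while the data is loading, then swap the reference.
        fresh = tuple(self._load_posts())
        with self._lock:
            self._snapshot = fresh

    def current(self):
        # Every request reads the same shared tuple instead of its own cache.
        with self._lock:
            return self._snapshot
```

In this sketch, something like a scheduler would call `rebuild()` after each scrape, and every request would rank against whatever `current()` returns.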

The second change came from another round of optimization on the library I use to compute the Hamming distance between each post's embedding and the embeddings of each of your interests. You can read more about this in an upcoming blog post, but I was able to speed up the comparisons by roughly another 40x, so Scour can now do around 1.6 billion comparisons per second.
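For anyone unfamiliar with Hamming distance, it is the number of bit positions where two equal-length bit strings differ. Here is a small Python sketch, assuming embeddings are stored as packed bytes; the function names are made up for this example, and this is nowhere near as fast as an optimized SIMD implementation.

```python
def hamming_distance(a: bytes, b: bytes) -> int:
    """Count the bit positions where two equal-length byte strings differ."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    # XOR leaves a 1 bit wherever the inputs differ; then count the 1 bits.
    diff = int.from_bytes(a, "big") ^ int.from_bytes(b, "big")
    return bin(diff).count("1")


def closest_interest_distance(post: bytes, interests: list[bytes]) -> int:
    """Return the smallest distance between a post and any interest."""
    return min(hamming_distance(post, interest) for interest in interests)
```

A smaller distance means the post and the interest are more similar, so the closest interest is the one with the minimum distance.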

Together, these changes make loading the feed feel instantaneous, even though your whole feed is ranked on the fly when you load the page.

🔖 Some of My Favorite Posts

Here are some of my favorite posts that I found on Scour in February:

- Scour is built on vector embeddings, so I'm especially excited when someone releases a new and promising-sounding embedding model. I get particularly excited by those that are explicitly trained to support binary quantization like this one from Perplexity: pplx-embed: State-of-the-Art Embedding Models for Web-Scale Retrieval.

- I also spend a fair amount of time thinking about optimizing Rust code, especially using SIMD, so this was an interesting write-up from TurboPuffer: Rust zero-cost abstractions vs. SIMD.

- This was an interesting write-up comparing what different coding agents do under the hood: I Intercepted 3,177 API Calls Across 4 AI Coding Tools. Here's What's Actually Filling Your Context Window.

- And finally, this one is on a very different topic but has some nice animations that demonstrate why boarding airplanes is slow and shows The Fastest Way to Board an Airplane.

Happy Scouring!

- Evan